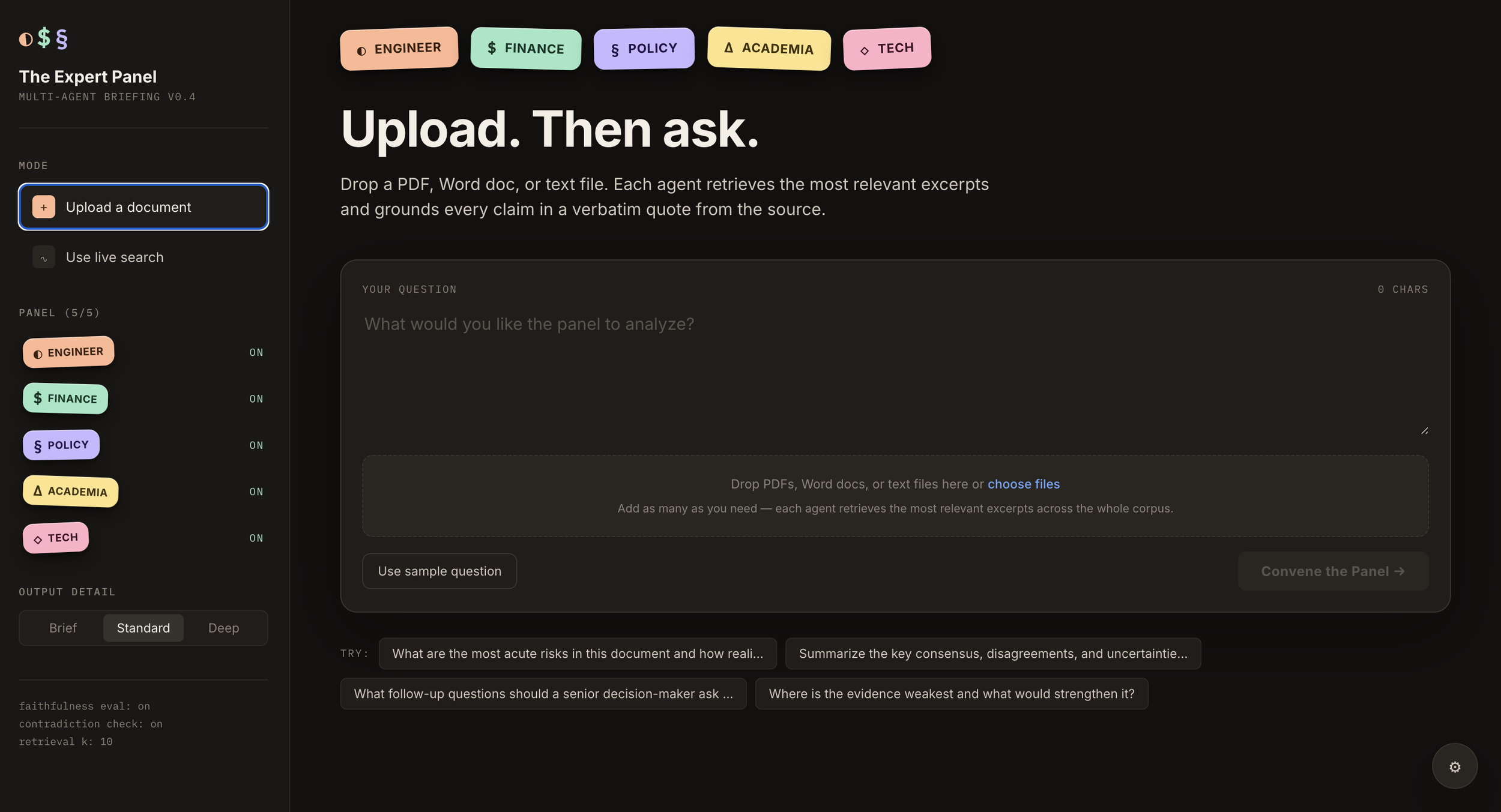

The Expert Panel

Multi-agent briefing tool with per-claim verification.

Why I built it

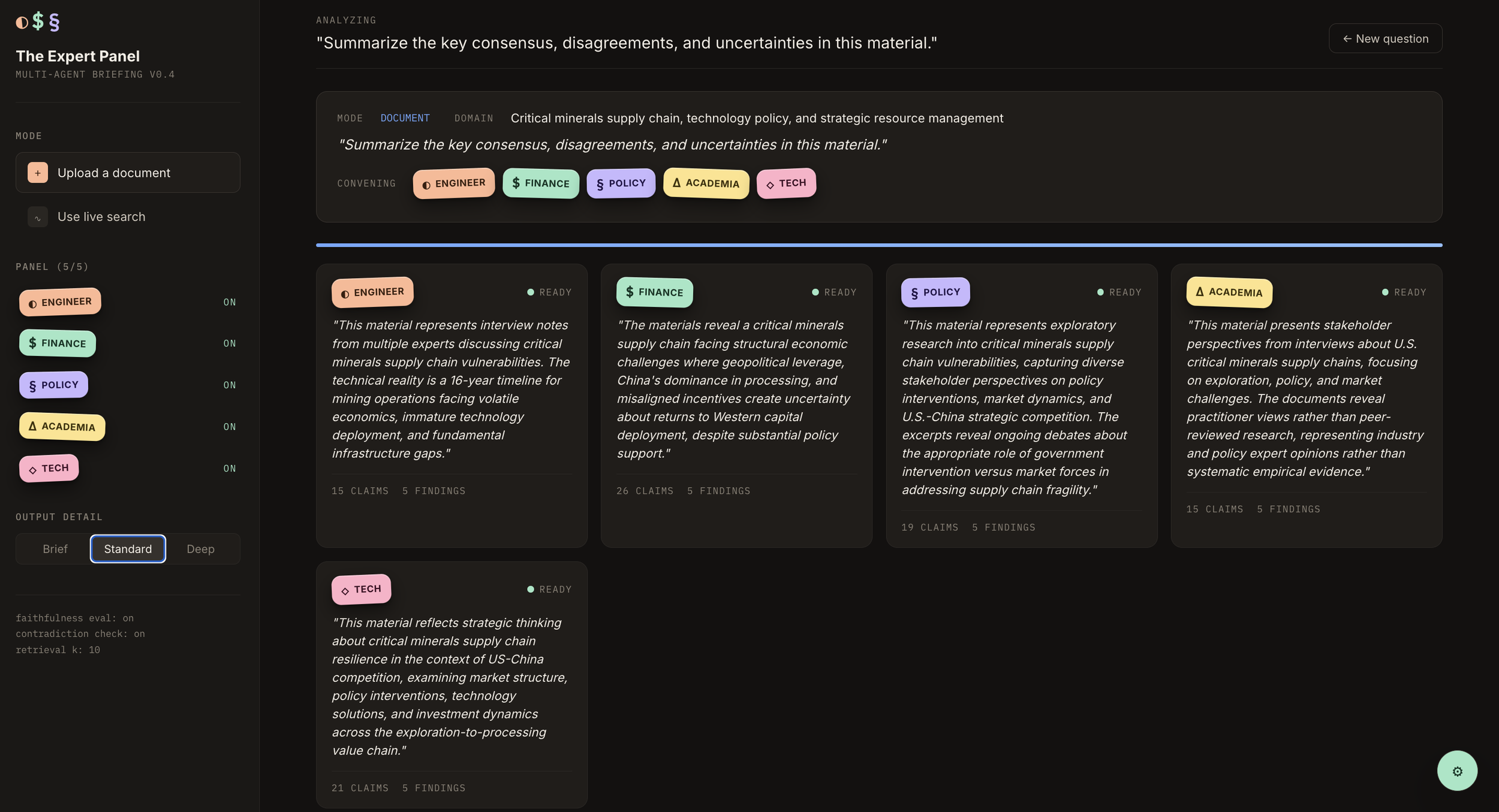

On two prior projects — critical mineral supply chains optimization research at Stanford H4D for In-Q-Tel, and small modular reactor briefings at the National Security Council — I synthesized one to two hundred expert interviews and multi-source research into structured briefings for senior officials. The work is slow and error-prone in a specific way: five lenses (engineering, finance, policy, academia, industry) each need to look at the same body of evidence and disagree productively. Then someone has to flag where they contradict each other, where claims aren't actually supported by the source, and what's being smuggled past as "general knowledge" when it's really speculation.

So I automated it.

How it works

Upload documents — any number, PDFs, Word, plaintext — or run live web research via Perplexity. A router LLM picks which of five specialist agents are relevant to your question. Each retrieves the most relevant excerpts from your corpus via ChromaDB and produces structured findings: a perspective, key claims, verbatim supporting quotes, self-stated confidence, areas of uncertainty, and follow-up questions.

A synthesizer combines all five into one briefing — explicitly preserving disagreement rather than smoothing it over.

Why the eval harness is the point

Every claim every agent makes is independently verified by a separate Claude Sonnet 4.5 judge running a strict five-step procedure: verbatim anchor in the source, fabricated-specifics check (numbers, dates, named entities), arithmetic and aggregation validity, semantic alignment, and a general-knowledge gate that refuses unsupported speculation passed off as fact.

A second pass scans across agents for direct factual contradictions (high / medium / low severity).

A calibration cross-tab compares stated agent confidence ("high", "medium", "low") against measured faithfulness — surfacing whether the panel is overconfident.

In testing, the eval caught failures the synthesizer missed. In one run, three independent agents fabricated a "$2.88 billion total federal funding" figure by adding a fixed dollar appropriation to a percentage tax credit — categorically invalid arithmetic. Without the eval, that claim would have shipped to a decision-maker. With it, the claim was flagged ⚠ in the UI, never silently removed, with the judge's specific objection visible.

Headline faithfulness rates run 90–95% on document-grounded analyses. Unfaithful claims are surfaced with the specific reason, not hidden.

Experimentation along the way

A few decisions I iterated on that ended up mattering:

Eval judge model. Switching from Sonnet to Haiku for speed dropped accuracy enough to miss real fabrications. Reverted to Sonnet, accepted the latency, then rewrote the prompt as a strict five-step procedure that caught more failures than the original loose rubric — going from three flagged errors per test corpus to six. A lower headline faithfulness rate, but a better evaluation.

Token-budget failure mode. The first deploy crashed because one agent's response truncated mid-JSON in the eight-document case. Bumped the output token budget, added a retry that detects stop_reason=max_tokens specifically and asks the agent to be more concise on the second attempt.

PII anonymization, on by default in interview mode and opt-in for documents. Runs locally before any API call — spaCy NER plus a curated public-institution allow-list, so "Department of Energy" and "IAEA" stay verbatim while interviewees and private firms get pseudonymized to Expert_A, Organization_A, etc. Phone numbers and personal emails are also redacted. The de-anonymization map never leaves the host machine.

What's different from a generic LLM

Most chat tools dump everything into context — capping at five to twenty files, no per-claim verification, no audit on the model's stated confidence.

This is RAG-grounded: arbitrary corpus size, retrieval into a fixed context budget, every claim cited back to a specific source file, every claim independently verified by a second model.

Stack

Anthropic Claude API (Sonnet 4.5 across all roles) · Perplexity sonar-pro for live search · ChromaDB + sentence-transformers (all-MiniLM-L6-v2) for retrieval · FastAPI + Server-Sent Events for streaming · React + Vite + TypeScript on the front end · single Cloud Run service with auto-TLS · bring-your-own-key supported · demo mode rate-capped to bound Anthropic spend.